HDR vs SDR: Everything You Should Know

Have you noticed that HDR has been popping up everywhere lately? It's on your mobile phone, 4K television, Netflix, Xbox and more. For most consumers, the first encounter with the term HDR was probably when taking a picture with a cell phone. Still, many users remain confused about it. What is HDR? How is it different from SDR? Is it worth upgrading to HDR? Read on to find out the details.

To Convert 4K 10-bit HDR to SDR Easily

Winxvideo AI - A super easy HDR to SDR converter with hardware acceleration. It enables users to:

- Convert HDR 4K HEVC 10-bit video to 4K/1080p SDR in a wide range of formats, such as MP4, H264, HEVC, MOV, MKV, AVI, FLV, WMV...

- Keep the same depth, color brightness and saturation, without washed-out color, after the HDR to SDR conversion.

- Freely adjust resolution (4K to 1080p/720p), frame rate (60FPS to 30FPS) and aspect ratio (18:9/1:1 to 16:9 or vice versa)...

- Process video up to 47x faster than real time thanks to GPU hardware acceleration.

Part 1. What's SDR and HDR?

SDR (Standard Dynamic Range) describes images or video that use a conventional gamma curve signal. This gamma curve was based on the limits of the cathode ray tube (CRT), which allows for a maximum luminance of 100 cd/m² (100 nits).
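To make the gamma curve a little more concrete, here is a minimal sketch of how a normalized SDR signal could map to screen brightness. The exponent of 2.4 and the perfectly black zero level are assumptions chosen for illustration (real displays differ), and `sdr_luminance` is a hypothetical helper name, not part of any standard or tool.

```python
def sdr_luminance(signal: float, peak_nits: float = 100.0, gamma: float = 2.4) -> float:
    """Map a normalized SDR signal (0.0 to 1.0) to display luminance in cd/m2.

    Assumptions: a pure power-law gamma curve, a 100 cd/m2 peak as described
    in the text, and a black level of exactly 0.
    """
    if not 0.0 <= signal <= 1.0:
        raise ValueError("signal must be between 0.0 and 1.0")
    return peak_nits * signal ** gamma


# Print a few sample points along the curve.
for value in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(f"signal {value:.2f} -> {sdr_luminance(value):6.2f} cd/m2")
```

Notice that a half-strength signal lands well below half of the peak brightness. The curve spends most of its signal values on darker tones, where the eye is more sensitive.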

HDR, short for High Dynamic Range, means a dynamic range that is higher than usual. It's the next generation of color clarity and realism in image and video, offering a wider range of contrast and color between the lightest and darkest tones in an image or video.

Part 2. HDR vs SDR: Is HDR Brighter than SDR?

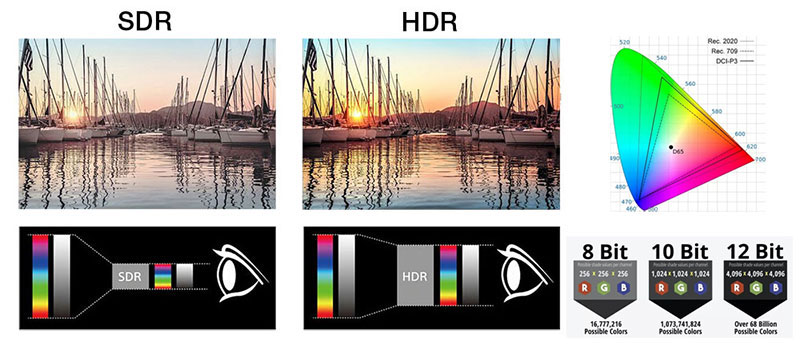

What's the difference between HDR and SDR? The biggest difference lies in color gamut and brightness range. SDR covers the sRGB color gamut and a brightness range of 0 to 100 nits, whereas HDR has a wider color range of up to DCI-P3, a higher upper limit of brightness and a darker lower limit. At the same time, it improves overall image quality in terms of contrast, grayscale resolution and other dimensions, giving viewers a more immersive experience.

To put it simply, HDR can get brighter than SDR, and it lets you see more of the details and colors in a scene. HDR is superior in these aspects:

- Brightness: HDR allows peak brightness of up to 1000 nits and black levels below 1 nit.

- Color gamut: HDR usually adopts P3, and sometimes even the Rec.2020 color gamut. SDR generally uses Rec.709.

- Color depth: HDR can use 8-bit, 10-bit or 12-bit color depth, while SDR is usually 8-bit and only rarely 10-bit.
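The color depth figures are easier to appreciate as numbers. The short sketch below works out how many brightness steps each red, green and blue channel gets at a given bit depth, and how many colors that makes in total. The function names are made up for this example.

```python
def levels_per_channel(bits: int) -> int:
    """Number of distinct steps one color channel can represent."""
    return 2 ** bits


def total_colors(bits: int) -> int:
    """Number of colors from three channels (red, green, blue)."""
    return levels_per_channel(bits) ** 3


for bits in (8, 10, 12):
    print(
        f"{bits}-bit: {levels_per_channel(bits):>5} levels per channel, "
        f"{total_colors(bits):,} colors"
    )
```

Going from 8-bit to 10-bit raises each channel from 256 to 1,024 steps, which is why gradients such as skies show less visible banding at higher bit depths.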

Part 3. Types of HDR: HDR10, Dolby Vision & HDR10+

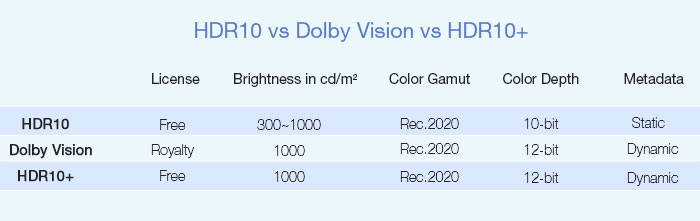

Actually, there is no single, final HDR standard. Several formats coexist, including HDR10, Dolby Vision, HDR10+ and more. Here, we'll go into the details of each of those.

HDR10

HDR10 is an open standard, and you don't have to pay any royalties to use it. The number "10" stands for 10-bit color depth. In addition, HDR10 recommends the wide Rec.2020 color gamut, 1000 nits of brightness, and static metadata, meaning one set of brightness information applies to the whole video.

HDR10 is the most common HDR standard. Almost all major TV manufacturers support it, and studios and streaming providers such as Sony, Disney, 20th Century Fox, Warner Bros., Paramount, Universal, and Netflix have adopted HDR10 for content such as 4K UHD Blu-ray discs. Besides, devices like Xbox One, PS4 and Apple TV also support HDR10.

Dolby Vision

Dolby Vision is an HDR standard that requires displays to be specifically designed with a Dolby Vision hardware chip. It carries a royalty fee of about $3 per TV set. Like HDR10, Dolby Vision uses the Rec.2020 wide color gamut and 1000 nits of brightness, but it adopts 12-bit color depth and supports dynamic metadata, which lets brightness information change from scene to scene.

HDR10+

HDR10+ is an HDR standard introduced by Samsung as a competitor to Dolby Vision, and it is essentially an evolved version of HDR10. Similar to Dolby Vision, HDR10+ supports dynamic metadata, but HDR10+ is an open standard, aiming to deliver a better audio-visual experience at a lower price.
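The practical difference between the static approach of HDR10 and the dynamic approach of Dolby Vision and HDR10+ can be shown with a toy example. The sketch below is only an illustration under simple assumptions: it dims a scene with a plain linear scale factor, which is not how any of these formats actually tone map, and the `Scene` class, function names and nit values are all invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Scene:
    name: str
    peak_nits: float  # brightest highlight in this scene


def scale_factor(content_peak: float, display_peak: float) -> float:
    """Toy tone mapping: dim the picture just enough to fit the display."""
    return min(1.0, display_peak / content_peak)


def static_scales(scenes: list[Scene], display_peak: float) -> dict[str, float]:
    """Static style: one factor for the whole title, set by its brightest scene."""
    title_peak = max(scene.peak_nits for scene in scenes)
    factor = scale_factor(title_peak, display_peak)
    return {scene.name: factor for scene in scenes}


def dynamic_scales(scenes: list[Scene], display_peak: float) -> dict[str, float]:
    """Dynamic style: each scene gets its own factor."""
    return {
        scene.name: scale_factor(scene.peak_nits, display_peak)
        for scene in scenes
    }


# Hypothetical movie with a dim scene and a bright one, shown on a 500-nit display.
scenes = [Scene("night street", 200.0), Scene("sunset", 1000.0)]
print("static: ", static_scales(scenes, display_peak=500.0))
print("dynamic:", dynamic_scales(scenes, display_peak=500.0))
```

With a single setting for the whole movie, the dim night scene is darkened along with the bright sunset even though the display could have shown it as is. With per-scene information, only the sunset needs to be dimmed.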

Part 4. Is Your Setup Capable of Playing HDR?

Now that you have a basic understanding of the difference between SDR and HDR, another common concern is HDR video playback. Is your setup capable of playing HDR? Where can you get HDR content?

To play 4K HDR content, your setup should meet these requirements:

1. Get HDR Content

In terms of streaming, Netflix and Amazon Prime support HDR on Windows 10. As for other HDR content, Sony, Disney, 20th Century Fox, Warner Bros., Paramount, Universal, and Netflix all use HDR10 for their 4K UHD Blu-ray discs. Or you can record your own 4K HDR content with a mobile phone, GoPro, DJI device, camcorder and more.

2. Make Sure Your Monitor Supports HDR

When you opt for an HDR 4K monitor, remember that brighter is better, the backlight dimming type is crucial, and the more DCI-P3 coverage, the better. Monitors like the Asus ROG Swift PG27UQ, Acer Predator X27 and Alienware AW5520QF are all good choices.

3. Make Sure Your Display Output is Compatible with HDR

HDR can be displayed over HDMI 2.0 and DisplayPort 1.3. If your GPU has either of these ports, it should be able to output HDR content. As a rule of thumb, all Nvidia 9xx series GPUs and newer have an HDMI 2.0 port, as do all AMD cards from 2016 onward.
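For the first requirement, it can help to confirm that a file you downloaded or recorded is really flagged as HDR. Below is a rough sketch that reads a video's color metadata with the ffprobe tool from FFmpeg and labels it. It assumes ffprobe is installed and on your PATH, and it relies on one simple rule of thumb: a transfer characteristic of `smpte2084` (PQ) or `arib-std-b67` (HLG) indicates HDR. The function names and the file name in the usage comment are hypothetical, and a file with missing metadata will be reported as "SDR or unknown".

```python
import json
import subprocess

# Transfer characteristics, as named by FFmpeg, that indicate HDR video.
HDR_TRANSFERS = {"smpte2084", "arib-std-b67"}  # PQ and HLG


def classify_stream(stream: dict) -> str:
    """Label a video stream from its ffprobe color metadata."""
    transfer = stream.get("color_transfer", "")
    return "HDR" if transfer in HDR_TRANSFERS else "SDR or unknown"


def probe_video(path: str) -> dict:
    """Return color metadata of the first video stream (requires ffprobe)."""
    result = subprocess.run(
        [
            "ffprobe", "-v", "error",
            "-select_streams", "v:0",
            "-show_entries", "stream=color_transfer,color_primaries,pix_fmt",
            "-of", "json",
            path,
        ],
        capture_output=True, text=True, check=True,
    )
    streams = json.loads(result.stdout).get("streams", [])
    return streams[0] if streams else {}


# Example usage (hypothetical file name):
#   info = probe_video("my_clip.mp4")
#   print(info, "->", classify_stream(info))
```

An HDR10 clip would typically also report `bt2020` color primaries and a 10-bit pixel format such as `yuv420p10le`, so those two fields are requested as well for a quick sanity check.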

As you can see, HDR is being adopted by the mainstream market with remarkable ease and speed, and we're quite sure it will become even more popular in the near future. If budget isn't a constraint and you care a lot about video quality, it's really worth upgrading to HDR.